Pandas是一款开放源码的BSD许可的Python库,为Python编程语言提供了高性能,易于使用的数据结构和数据分析工具。Pandas用于广泛的领域,包括金融,经济,统计,分析等学术和商业领域。

Pandas的主要特点:

- 快速高效的DataFrame对象,具有默认和自定义的索引。

- 将数据从不同文件格式加载到内存中的数据对象的工具。

- 丢失数据的数据对齐和综合处理。重组和摆动日期集。

- 基于标签的切片,索引和大数据集的子集。

- 可以删除或插入来自数据结构的列。

- 按数据分组进行聚合和转换。

- 高性能合并和数据加入。时间序列功能。

Pandas处理以下三个数据结构

- Series

- DataFrame

- Panel

import pandas as pd |

Series

s = pd.Series([1,3,6,np.nan,44,1]) |

0 1.0

1 3.0

2 6.0

3 NaN

4 44.0

5 1.0

dtype: float64

Series的字符串表现形式为:索引在左边,值在右边。由于我们没有为数据指定索引。于是会自动创建一个0到N-1(N为长度)的整数型索引。

DataFrame

dates = pd.date_range('20130101', periods=6) |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-01 | 0 | 1 | 2 | 3 |

| 2013-01-02 | 4 | 5 | 6 | 7 |

| 2013-01-03 | 8 | 9 | 10 | 11 |

| 2013-01-04 | 12 | 13 | 14 | 15 |

| 2013-01-05 | 16 | 17 | 18 | 19 |

| 2013-01-06 | 20 | 21 | 22 | 23 |

DataFrame是一个表格型的数据结构,它包含有一组有序的列,每列可以是不同的值类型(数值,字符串,布尔值等)。DataFrame既有行索引也有列索引, 它可以被看做由Series组成的大字典。

选择数据

筛选

df['A'] |

2013-01-01 0

2013-01-02 4

2013-01-03 8

2013-01-04 12

2013-01-05 16

2013-01-06 20

Freq: D, Name: A, dtype: int64

df.A |

2013-01-01 0

2013-01-02 4

2013-01-03 8

2013-01-04 12

2013-01-05 16

2013-01-06 20

Freq: D, Name: A, dtype: int64

df[0:3] |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-01 | 0 | 1 | 2 | 3 |

| 2013-01-02 | 4 | 5 | 6 | 7 |

| 2013-01-03 | 8 | 9 | 10 | 11 |

df['20130102':'20130104'] |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-02 | 4 | 5 | 6 | 7 |

| 2013-01-03 | 8 | 9 | 10 | 11 |

| 2013-01-04 | 12 | 13 | 14 | 15 |

df[2:2] |

| A | B | C | D |

|---|

df['20130102':'20130102'] |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-02 | 4 | 5 | 6 | 7 |

标签筛选

df.loc['20130102'] |

A 4

B 5

C 6

D 7

Name: 2013-01-02 00:00:00, dtype: int64

df.loc[:,['A','B']] |

| A | B | |

|---|---|---|

| 2013-01-01 | 0 | 1 |

| 2013-01-02 | 4 | 5 |

| 2013-01-03 | 8 | 9 |

| 2013-01-04 | 12 | 13 |

| 2013-01-05 | 16 | 17 |

| 2013-01-06 | 20 | 21 |

df.loc['20130102',['A','B']] |

A 4

B 5

Name: 2013-01-02 00:00:00, dtype: int64

df.loc['20130102'] |

A 4

B 5

C 6

D 7

Name: 2013-01-02 00:00:00, dtype: int64

序列筛选

df.iloc[3,1] |

13

df.iloc[3:5,1:3] |

| B | C | |

|---|---|---|

| 2013-01-04 | 13 | 14 |

| 2013-01-05 | 17 | 18 |

df.iloc[[1,3,5],1:3] |

| B | C | |

|---|---|---|

| 2013-01-02 | 5 | 6 |

| 2013-01-04 | 13 | 14 |

| 2013-01-06 | 21 | 22 |

判断筛选

df[df.A>8] |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-04 | 12 | 13 | 14 | 15 |

| 2013-01-05 | 16 | 17 | 18 | 19 |

| 2013-01-06 | 20 | 21 | 22 | 23 |

设置值

df.iloc[2,2] = 1111 |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-01 | 0 | 2222 | 2 | 3 |

| 2013-01-02 | 4 | 5 | 6 | 7 |

| 2013-01-03 | 8 | 0 | 1111 | 11 |

| 2013-01-04 | 12 | 0 | 14 | 15 |

| 2013-01-05 | 16 | 0 | 18 | 19 |

| 2013-01-06 | 20 | 0 | 22 | 23 |

df.B[df.A>4] = 0 |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-01 | 0 | 1 | 2 | 3 |

| 2013-01-02 | 4 | 5 | 6 | 7 |

| 2013-01-03 | 8 | 0 | 10 | 11 |

| 2013-01-04 | 12 | 0 | 14 | 15 |

| 2013-01-05 | 16 | 0 | 18 | 19 |

| 2013-01-06 | 20 | 0 | 22 | 23 |

df['F'] = np.nan |

| A | B | C | D | F | |

|---|---|---|---|---|---|

| 2013-01-01 | 0 | 2222 | 2 | 3 | NaN |

| 2013-01-02 | 4 | 5 | 6 | 7 | NaN |

| 2013-01-03 | 8 | 0 | 1111 | 11 | NaN |

| 2013-01-04 | 12 | 0 | 14 | 15 | NaN |

| 2013-01-05 | 16 | 0 | 18 | 19 | NaN |

| 2013-01-06 | 20 | 0 | 22 | 23 | NaN |

df['E'] = pd.Series([1,2,3,4,5,6], index=pd.date_range('20130101',periods=6)) |

| A | B | C | D | F | E | |

|---|---|---|---|---|---|---|

| 2013-01-01 | 0 | 2222 | 2 | 3 | NaN | 1 |

| 2013-01-02 | 4 | 5 | 6 | 7 | NaN | 2 |

| 2013-01-03 | 8 | 0 | 1111 | 11 | NaN | 3 |

| 2013-01-04 | 12 | 0 | 14 | 15 | NaN | 4 |

| 2013-01-05 | 16 | 0 | 18 | 19 | NaN | 5 |

| 2013-01-06 | 20 | 0 | 22 | 23 | NaN | 6 |

处理丢失数据

dates = pd.date_range('20130101', periods=6) |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-01 | 0 | NaN | 2.0 | 3 |

| 2013-01-02 | 4 | 5.0 | NaN | 7 |

| 2013-01-03 | 8 | 9.0 | 10.0 | 11 |

| 2013-01-04 | 12 | 13.0 | 14.0 | 15 |

| 2013-01-05 | 16 | 17.0 | 18.0 | 19 |

| 2013-01-06 | 20 | 21.0 | 22.0 | 23 |

# 去掉有 NaN 的行或列 |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-03 | 8 | 9.0 | 10.0 | 11 |

| 2013-01-04 | 12 | 13.0 | 14.0 | 15 |

| 2013-01-05 | 16 | 17.0 | 18.0 | 19 |

| 2013-01-06 | 20 | 21.0 | 22.0 | 23 |

# 将 NaN 的值用其他值代替 |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-01 | 0 | 0.0 | 2.0 | 3 |

| 2013-01-02 | 4 | 5.0 | 0.0 | 7 |

| 2013-01-03 | 8 | 9.0 | 10.0 | 11 |

| 2013-01-04 | 12 | 13.0 | 14.0 | 15 |

| 2013-01-05 | 16 | 17.0 | 18.0 | 19 |

| 2013-01-06 | 20 | 21.0 | 22.0 | 23 |

# 判断是否有缺失数据 NaN, 为 True 表示缺失数据 |

| A | B | C | D | |

|---|---|---|---|---|

| 2013-01-01 | False | True | False | False |

| 2013-01-02 | False | False | True | False |

| 2013-01-03 | False | False | False | False |

| 2013-01-04 | False | False | False | False |

| 2013-01-05 | False | False | False | False |

| 2013-01-06 | False | False | False | False |

np.any(df.isnull()) == True |

True

合并

axis (合并方向)

df1 = pd.DataFrame(np.ones((3,4))*0, columns=['a','b','c','d']) |

| a | b | c | d | |

|---|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 1 | 0.0 | 0.0 | 0.0 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 |

pd.concat([df1, df2, df3], axis=0) |

| a | b | c | d | |

|---|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 1 | 0.0 | 0.0 | 0.0 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 |

| 0 | 1.0 | 1.0 | 1.0 | 1.0 |

| 1 | 1.0 | 1.0 | 1.0 | 1.0 |

| 2 | 1.0 | 1.0 | 1.0 | 1.0 |

| 0 | 2.0 | 2.0 | 2.0 | 2.0 |

| 1 | 2.0 | 2.0 | 2.0 | 2.0 |

| 2 | 2.0 | 2.0 | 2.0 | 2.0 |

# 重置 index |

| a | b | c | d | |

|---|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 1 | 0.0 | 0.0 | 0.0 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 |

| 3 | 1.0 | 1.0 | 1.0 | 1.0 |

| 4 | 1.0 | 1.0 | 1.0 | 1.0 |

| 5 | 1.0 | 1.0 | 1.0 | 1.0 |

| 6 | 2.0 | 2.0 | 2.0 | 2.0 |

| 7 | 2.0 | 2.0 | 2.0 | 2.0 |

| 8 | 2.0 | 2.0 | 2.0 | 2.0 |

join (合并方式)

df1 = pd.DataFrame(np.ones((3,4))*0, columns=['a','b','c','d'], index=[1,2,3]) |

| a | b | c | d | |

|---|---|---|---|---|

| 1 | 0.0 | 0.0 | 0.0 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 |

| 3 | 0.0 | 0.0 | 0.0 | 0.0 |

pd.concat([df1, df2], axis=0, join='outer',sort=True) |

| a | b | c | d | e | |

|---|---|---|---|---|---|

| 1 | 0.0 | 0.0 | 0.0 | 0.0 | NaN |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 | NaN |

| 3 | 0.0 | 0.0 | 0.0 | 0.0 | NaN |

| 2 | NaN | 1.0 | 1.0 | 1.0 | 1.0 |

| 3 | NaN | 1.0 | 1.0 | 1.0 | 1.0 |

| 4 | NaN | 1.0 | 1.0 | 1.0 | 1.0 |

pd.concat([df1, df2], axis=0, join='inner', ignore_index=True) |

| b | c | d | |

|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.0 |

| 1 | 0.0 | 0.0 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 |

| 3 | 1.0 | 1.0 | 1.0 |

| 4 | 1.0 | 1.0 | 1.0 |

| 5 | 1.0 | 1.0 | 1.0 |

pd.concat([df1, df2], axis=1, join_axes=[df1.index]) # 依照 axes 合并 |

| a | b | c | d | b | c | d | e | |

|---|---|---|---|---|---|---|---|---|

| 1 | 0.0 | 0.0 | 0.0 | 0.0 | NaN | NaN | NaN | NaN |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 | 1.0 | 1.0 | 1.0 | 1.0 |

| 3 | 0.0 | 0.0 | 0.0 | 0.0 | 1.0 | 1.0 | 1.0 | 1.0 |

添加数据

df1 = pd.DataFrame(np.ones((3,4))*0, columns=['a','b','c','d']) |

df1.append(df2, ignore_index=True) |

| a | b | c | d | |

|---|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 1 | 0.0 | 0.0 | 0.0 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 |

| 3 | 1.0 | 1.0 | 1.0 | 1.0 |

| 4 | 1.0 | 1.0 | 1.0 | 1.0 |

| 5 | 1.0 | 1.0 | 1.0 | 1.0 |

df1.append(df2, ignore_index=True) |

| a | b | c | d | |

|---|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 1 | 0.0 | 0.0 | 0.0 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 |

| 3 | 1.0 | 1.0 | 1.0 | 1.0 |

| 4 | 1.0 | 1.0 | 1.0 | 1.0 |

| 5 | 1.0 | 1.0 | 1.0 | 1.0 |

df1.append([df2, df3], ignore_index=True) |

| a | b | c | d | |

|---|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 1 | 0.0 | 0.0 | 0.0 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 |

| 3 | 1.0 | 1.0 | 1.0 | 1.0 |

| 4 | 1.0 | 1.0 | 1.0 | 1.0 |

| 5 | 1.0 | 1.0 | 1.0 | 1.0 |

| 6 | 3.0 | 3.0 | 3.0 | 3.0 |

| 7 | 3.0 | 3.0 | 3.0 | 3.0 |

| 8 | 3.0 | 3.0 | 3.0 | 3.0 |

df1.append(s1, ignore_index=True) |

| a | b | c | d | |

|---|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 1 | 0.0 | 0.0 | 0.0 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 |

| 3 | 1.0 | 2.0 | 3.0 | 4.0 |

索引合并

left = pd.DataFrame({'key': ['K0', 'K1', 'K2', 'K3'], |

key A B

0 K0 A0 B0

1 K1 A1 B1

2 K2 A2 B2

3 K3 A3 B3

key C D

0 K0 C0 D0

1 K1 C1 D1

2 K2 C2 D2

3 K3 C3 D3

pd.merge(left, right, on='key') |

| key | A | B | C | D | |

|---|---|---|---|---|---|

| 0 | K0 | A0 | B0 | C0 | D0 |

| 1 | K1 | A1 | B1 | C1 | D1 |

| 2 | K2 | A2 | B2 | C2 | D2 |

| 3 | K3 | A3 | B3 | C3 | D3 |

left = pd.DataFrame({'key1': ['K0', 'K0', 'K1', 'K2'], |

key1 key2 A B

0 K0 K0 A0 B0

1 K0 K1 A1 B1

2 K1 K0 A2 B2

3 K2 K1 A3 B3

key1 key2 C D

0 K0 K0 C0 D0

1 K1 K0 C1 D1

2 K1 K0 C2 D2

3 K2 K0 C3 D3

#依据key1与key2 columns进行合并,并打印出四种结果['left', 'right', 'outer', 'inner'] |

| key1 | key2 | A | B | C | D | |

|---|---|---|---|---|---|---|

| 0 | K0 | K0 | A0 | B0 | C0 | D0 |

| 1 | K1 | K0 | A2 | B2 | C1 | D1 |

| 2 | K1 | K0 | A2 | B2 | C2 | D2 |

pd.merge(left, right, on=['key1', 'key2'], how='outer') |

| key1 | key2 | A | B | C | D | |

|---|---|---|---|---|---|---|

| 0 | K0 | K0 | A0 | B0 | C0 | D0 |

| 1 | K0 | K1 | A1 | B1 | NaN | NaN |

| 2 | K1 | K0 | A2 | B2 | C1 | D1 |

| 3 | K1 | K0 | A2 | B2 | C2 | D2 |

| 4 | K2 | K1 | A3 | B3 | NaN | NaN |

| 5 | K2 | K0 | NaN | NaN | C3 | D3 |

pd.merge(left, right, on=['key1', 'key2'], how='left') |

| key1 | key2 | A | B | C | D | |

|---|---|---|---|---|---|---|

| 0 | K0 | K0 | A0 | B0 | C0 | D0 |

| 1 | K0 | K1 | A1 | B1 | NaN | NaN |

| 2 | K1 | K0 | A2 | B2 | C1 | D1 |

| 3 | K1 | K0 | A2 | B2 | C2 | D2 |

| 4 | K2 | K1 | A3 | B3 | NaN | NaN |

pd.merge(left, right, on=['key1', 'key2'], how='right') |

| key1 | key2 | A | B | C | D | |

|---|---|---|---|---|---|---|

| 0 | K0 | K0 | A0 | B0 | C0 | D0 |

| 1 | K1 | K0 | A2 | B2 | C1 | D1 |

| 2 | K1 | K0 | A2 | B2 | C2 | D2 |

| 3 | K2 | K0 | NaN | NaN | C3 | D3 |

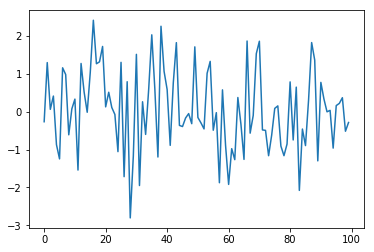

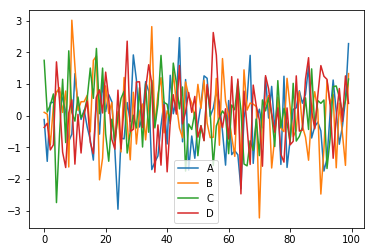

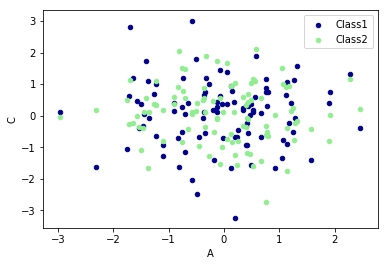

plot 出图

%matplotlib inline |

data = pd.DataFrame( |

ax = data.plot.scatter(x='A',y='B',color='DarkBlue',label='Class1') |

SQL 转 Pandas

import pandas as pd |

| total_bill | tip | sex | smoker | day | time | size | |

|---|---|---|---|---|---|---|---|

| 0 | 16.99 | 1.01 | Female | No | Sun | Dinner | 2 |

| 1 | 10.34 | 1.66 | Male | No | Sun | Dinner | 3 |

| 2 | 21.01 | 3.50 | Male | No | Sun | Dinner | 3 |

| 3 | 23.68 | 3.31 | Male | No | Sun | Dinner | 2 |

| 4 | 24.59 | 3.61 | Female | No | Sun | Dinner | 4 |

SELECT total_bill, tip, smoker, time FROM tips LIMIT 5;

tips[["total_bill", "tip", "smoker", "time"]].head(5) |

| total_bill | tip | smoker | time | |

|---|---|---|---|---|

| 0 | 16.99 | 1.01 | No | Dinner |

| 1 | 10.34 | 1.66 | No | Dinner |

| 2 | 21.01 | 3.50 | No | Dinner |

| 3 | 23.68 | 3.31 | No | Dinner |

| 4 | 24.59 | 3.61 | No | Dinner |

SELECT * FROM tips WHERE time = 'Dinner' LIMIT 5;

tips[tips.time == "Dinner"].head(5) |

| total_bill | tip | sex | smoker | day | time | size | |

|---|---|---|---|---|---|---|---|

| 0 | 16.99 | 1.01 | Female | No | Sun | Dinner | 2 |

| 1 | 10.34 | 1.66 | Male | No | Sun | Dinner | 3 |

| 2 | 21.01 | 3.50 | Male | No | Sun | Dinner | 3 |

| 3 | 23.68 | 3.31 | Male | No | Sun | Dinner | 2 |

| 4 | 24.59 | 3.61 | Female | No | Sun | Dinner | 4 |

SELECT * FROM tips WHERE time = 'Dinner' AND tip > 5.00;

tips[(tips.time == "Dinner") & (tips.tip > 5.00)] |

| total_bill | tip | sex | smoker | day | time | size | |

|---|---|---|---|---|---|---|---|

| 23 | 39.42 | 7.58 | Male | No | Sat | Dinner | 4 |

| 44 | 30.40 | 5.60 | Male | No | Sun | Dinner | 4 |

| 47 | 32.40 | 6.00 | Male | No | Sun | Dinner | 4 |

| 52 | 34.81 | 5.20 | Female | No | Sun | Dinner | 4 |

| 59 | 48.27 | 6.73 | Male | No | Sat | Dinner | 4 |

| 116 | 29.93 | 5.07 | Male | No | Sun | Dinner | 4 |

| 155 | 29.85 | 5.14 | Female | No | Sun | Dinner | 5 |

| 170 | 50.81 | 10.00 | Male | Yes | Sat | Dinner | 3 |

| 172 | 7.25 | 5.15 | Male | Yes | Sun | Dinner | 2 |

| 181 | 23.33 | 5.65 | Male | Yes | Sun | Dinner | 2 |

| 183 | 23.17 | 6.50 | Male | Yes | Sun | Dinner | 4 |

| 211 | 25.89 | 5.16 | Male | Yes | Sat | Dinner | 4 |

| 212 | 48.33 | 9.00 | Male | No | Sat | Dinner | 4 |

| 214 | 28.17 | 6.50 | Female | Yes | Sat | Dinner | 3 |

| 239 | 29.03 | 5.92 | Male | No | Sat | Dinner | 3 |

SELECT * FROM tips WHERE size >= 5 OR total_bill > 45;

tips[(tips.size >= 5) | (tips.total_bill > 45)] |

| total_bill | tip | sex | smoker | day | time | size | |

|---|---|---|---|---|---|---|---|

| 0 | 16.99 | 1.01 | Female | No | Sun | Dinner | 2 |

| 1 | 10.34 | 1.66 | Male | No | Sun | Dinner | 3 |

| 2 | 21.01 | 3.50 | Male | No | Sun | Dinner | 3 |

| 3 | 23.68 | 3.31 | Male | No | Sun | Dinner | 2 |

| 4 | 24.59 | 3.61 | Female | No | Sun | Dinner | 4 |

| 5 | 25.29 | 4.71 | Male | No | Sun | Dinner | 4 |

| 6 | 8.77 | 2.00 | Male | No | Sun | Dinner | 2 |

| 7 | 26.88 | 3.12 | Male | No | Sun | Dinner | 4 |

| 8 | 15.04 | 1.96 | Male | No | Sun | Dinner | 2 |

| 9 | 14.78 | 3.23 | Male | No | Sun | Dinner | 2 |

| 10 | 10.27 | 1.71 | Male | No | Sun | Dinner | 2 |

| 11 | 35.26 | 5.00 | Female | No | Sun | Dinner | 4 |

| 12 | 15.42 | 1.57 | Male | No | Sun | Dinner | 2 |

| 13 | 18.43 | 3.00 | Male | No | Sun | Dinner | 4 |

| 14 | 14.83 | 3.02 | Female | No | Sun | Dinner | 2 |

| 15 | 21.58 | 3.92 | Male | No | Sun | Dinner | 2 |

| 16 | 10.33 | 1.67 | Female | No | Sun | Dinner | 3 |

| 17 | 16.29 | 3.71 | Male | No | Sun | Dinner | 3 |

| 18 | 16.97 | 3.50 | Female | No | Sun | Dinner | 3 |

| 19 | 20.65 | 3.35 | Male | No | Sat | Dinner | 3 |

| 20 | 17.92 | 4.08 | Male | No | Sat | Dinner | 2 |

| 21 | 20.29 | 2.75 | Female | No | Sat | Dinner | 2 |

| 22 | 15.77 | 2.23 | Female | No | Sat | Dinner | 2 |

| 23 | 39.42 | 7.58 | Male | No | Sat | Dinner | 4 |

| 24 | 19.82 | 3.18 | Male | No | Sat | Dinner | 2 |

| 25 | 17.81 | 2.34 | Male | No | Sat | Dinner | 4 |

| 26 | 13.37 | 2.00 | Male | No | Sat | Dinner | 2 |

| 27 | 12.69 | 2.00 | Male | No | Sat | Dinner | 2 |

| 28 | 21.70 | 4.30 | Male | No | Sat | Dinner | 2 |

| 29 | 19.65 | 3.00 | Female | No | Sat | Dinner | 2 |

| … | … | … | … | … | … | … | … |

| 214 | 28.17 | 6.50 | Female | Yes | Sat | Dinner | 3 |

| 215 | 12.90 | 1.10 | Female | Yes | Sat | Dinner | 2 |

| 216 | 28.15 | 3.00 | Male | Yes | Sat | Dinner | 5 |

| 217 | 11.59 | 1.50 | Male | Yes | Sat | Dinner | 2 |

| 218 | 7.74 | 1.44 | Male | Yes | Sat | Dinner | 2 |

| 219 | 30.14 | 3.09 | Female | Yes | Sat | Dinner | 4 |

| 220 | 12.16 | 2.20 | Male | Yes | Fri | Lunch | 2 |

| 221 | 13.42 | 3.48 | Female | Yes | Fri | Lunch | 2 |

| 222 | 8.58 | 1.92 | Male | Yes | Fri | Lunch | 1 |

| 223 | 15.98 | 3.00 | Female | No | Fri | Lunch | 3 |

| 224 | 13.42 | 1.58 | Male | Yes | Fri | Lunch | 2 |

| 225 | 16.27 | 2.50 | Female | Yes | Fri | Lunch | 2 |

| 226 | 10.09 | 2.00 | Female | Yes | Fri | Lunch | 2 |

| 227 | 20.45 | 3.00 | Male | No | Sat | Dinner | 4 |

| 228 | 13.28 | 2.72 | Male | No | Sat | Dinner | 2 |

| 229 | 22.12 | 2.88 | Female | Yes | Sat | Dinner | 2 |

| 230 | 24.01 | 2.00 | Male | Yes | Sat | Dinner | 4 |

| 231 | 15.69 | 3.00 | Male | Yes | Sat | Dinner | 3 |

| 232 | 11.61 | 3.39 | Male | No | Sat | Dinner | 2 |

| 233 | 10.77 | 1.47 | Male | No | Sat | Dinner | 2 |

| 234 | 15.53 | 3.00 | Male | Yes | Sat | Dinner | 2 |

| 235 | 10.07 | 1.25 | Male | No | Sat | Dinner | 2 |

| 236 | 12.60 | 1.00 | Male | Yes | Sat | Dinner | 2 |

| 237 | 32.83 | 1.17 | Male | Yes | Sat | Dinner | 2 |

| 238 | 35.83 | 4.67 | Female | No | Sat | Dinner | 3 |

| 239 | 29.03 | 5.92 | Male | No | Sat | Dinner | 3 |

| 240 | 27.18 | 2.00 | Female | Yes | Sat | Dinner | 2 |

| 241 | 22.67 | 2.00 | Male | Yes | Sat | Dinner | 2 |

| 242 | 17.82 | 1.75 | Male | No | Sat | Dinner | 2 |

| 243 | 18.78 | 3.00 | Female | No | Thur | Dinner | 2 |

244 rows × 7 columns

frame = pd.DataFrame({'col1': ['A', 'B', np.NaN, 'C', 'D'],'col2': ['F', np.NaN, 'G', 'H', 'I']}) |

| col1 | col2 | |

|---|---|---|

| 0 | A | F |

| 1 | B | NaN |

| 2 | NaN | G |

| 3 | C | H |

| 4 | D | I |

SELECT * FROM frame WHERE col2 IS NULL;

frame[frame.col2.isna()] |

| col1 | col2 | |

|---|---|---|

| 1 | B | NaN |

SELECT * FROM frame WHERE col1 IS NOT NULL;

frame[frame['col1'].notna()] |

| col1 | col2 | |

|---|---|---|

| 0 | A | F |

| 1 | B | NaN |

| 3 | C | H |

| 4 | D | I |

frame[frame.col2.isna()] |

| col1 | col2 | |

|---|---|---|

| 1 | B | NaN |

SELECT sex, count(*) FROM tips GROUP BY sex;

tips.groupby('sex').size() |

sex

Female 87

Male 157

dtype: int64

tips.groupby('sex').count() # 返回每一列not null的记录个数 |

| total_bill | tip | smoker | day | time | size | |

|---|---|---|---|---|---|---|

| sex | ||||||

| Female | 87 | 87 | 87 | 87 | 87 | 87 |

| Male | 157 | 157 | 157 | 157 | 157 | 157 |

SELECT day, AVG(tip), COUNT(*) FROM tips GROUP BY day;

tips.groupby('day').agg({'tip': np.mean, 'day': np.size}) |

| tip | day | |

|---|---|---|

| day | ||

| Fri | 2.734737 | 19 |

| Sat | 2.993103 | 87 |

| Sun | 3.255132 | 76 |

| Thur | 2.771452 | 62 |

SELECT smoker, day, COUNT(*), AVG(tip) FROM tips GROUP BY smoker, day;

df1 = pd.DataFrame({'key': ['A', 'B', 'C', 'D'],'value': np.random.randn(4)}) |

| key | value | |

|---|---|---|

| 0 | A | 1.817347 |

| 1 | B | 0.012256 |

| 2 | C | -1.499646 |

| 3 | D | 1.707255 |

SELECT * FROM df1 INNER JOIN df2 ON df1.key = df2.key;

pd.merge(df1, df2, on='key') |

| key | value_x | value_y | |

|---|---|---|---|

| 0 | B | 0.012256 | 0.061214 |

| 1 | D | 1.707255 | 0.212460 |

| 2 | D | 1.707255 | 1.013015 |

indexed_df2 = df2.set_index('key') |

| key | value_x | value_y | |

|---|---|---|---|

| 1 | B | 0.012256 | 0.061214 |

| 3 | D | 1.707255 | 0.212460 |

| 3 | D | 1.707255 | 1.013015 |

SELECT * FROM df1 RIGHT OUTER JOIN df2 ON df1.key = df2.key;

pd.merge(df1, df2, on='key', how='right') |

| key | value_x | value_y | |

|---|---|---|---|

| 0 | B | 0.012256 | 0.061214 |

| 1 | D | 1.707255 | 0.212460 |

| 2 | D | 1.707255 | 1.013015 |

| 3 | E | NaN | 0.805498 |

SELECT * FROM df1 FULL OUTER JOIN df2 ON df1.key = df2.key;

pd.merge(df1, df2, on='key', how='outer') |

| key | value_x | value_y | |

|---|---|---|---|

| 0 | A | 1.817347 | NaN |

| 1 | B | 0.012256 | 0.061214 |

| 2 | C | -1.499646 | NaN |

| 3 | D | 1.707255 | 0.212460 |

| 4 | D | 1.707255 | 1.013015 |

| 5 | E | NaN | 0.805498 |

df1 = pd.DataFrame({'city': ['Chicago', 'San Francisco', 'New York City'], 'rank': range(1, 4)}) |

| city | rank | |

|---|---|---|

| 0 | Chicago | 1 |

| 1 | San Francisco | 2 |

| 2 | New York City | 3 |

SELECT city, rank FROM df1 UNION ALL SELECT city, rank FROM df2;

pd.concat([df1, df2]) |

| city | rank | |

|---|---|---|

| 0 | Chicago | 1 |

| 1 | San Francisco | 2 |

| 2 | New York City | 3 |

| 0 | Chicago | 1 |

| 1 | Boston | 4 |

| 2 | Los Angeles | 5 |

SELECT city, rank FROM df1 UNION SELECT city, rank FROM df2;

pd.concat([df1, df2]).drop_duplicates() |

| city | rank | |

|---|---|---|

| 0 | Chicago | 1 |

| 1 | San Francisco | 2 |

| 2 | New York City | 3 |

| 1 | Boston | 4 |

| 2 | Los Angeles | 5 |

SELECT * FROM tips ORDER BY tip DESC LIMIT 10 OFFSET 5;

tips.nlargest(10+5, columns='tip').tail(10) |

| total_bill | tip | sex | smoker | day | time | size | |

|---|---|---|---|---|---|---|---|

| 183 | 23.17 | 6.50 | Male | Yes | Sun | Dinner | 4 |

| 214 | 28.17 | 6.50 | Female | Yes | Sat | Dinner | 3 |

| 47 | 32.40 | 6.00 | Male | No | Sun | Dinner | 4 |

| 239 | 29.03 | 5.92 | Male | No | Sat | Dinner | 3 |

| 88 | 24.71 | 5.85 | Male | No | Thur | Lunch | 2 |

| 181 | 23.33 | 5.65 | Male | Yes | Sun | Dinner | 2 |

| 44 | 30.40 | 5.60 | Male | No | Sun | Dinner | 4 |

| 52 | 34.81 | 5.20 | Female | No | Sun | Dinner | 4 |

| 85 | 34.83 | 5.17 | Female | No | Thur | Lunch | 4 |

| 211 | 25.89 | 5.16 | Male | Yes | Sat | Dinner | 4 |

SELECT * FROM (

SELECT

t.*,

ROW_NUMBER() OVER(PARTITION BY day ORDER BY total_bill DESC) AS rn

FROM tips t

)

WHERE rn < 3

ORDER BY day, rn;

tips.assign(rn=tips.sort_values(['total_bill'], ascending=False).groupby(['day']).cumcount() + 1).query('rn < 3').sort_values(['day','rn']) |

| total_bill | tip | sex | smoker | day | time | size | rn | |

|---|---|---|---|---|---|---|---|---|

| 95 | 40.17 | 4.73 | Male | Yes | Fri | Dinner | 4 | 1 |

| 90 | 28.97 | 3.00 | Male | Yes | Fri | Dinner | 2 | 2 |

| 170 | 50.81 | 10.00 | Male | Yes | Sat | Dinner | 3 | 1 |

| 212 | 48.33 | 9.00 | Male | No | Sat | Dinner | 4 | 2 |

| 156 | 48.17 | 5.00 | Male | No | Sun | Dinner | 6 | 1 |

| 182 | 45.35 | 3.50 | Male | Yes | Sun | Dinner | 3 | 2 |

| 197 | 43.11 | 5.00 | Female | Yes | Thur | Lunch | 4 | 1 |

| 142 | 41.19 | 5.00 | Male | No | Thur | Lunch | 5 | 2 |

UPDATE tips SET tip = tip*2 WHERE tip < 2;

tips.loc[(tips['tip'] < 3) & (tips['tip'] > 2) , 'tip'] *= 2 |

| total_bill | tip | sex | smoker | day | time | size | |

|---|---|---|---|---|---|---|---|

| 0 | 16.99 | 4.04 | Female | No | Sun | Dinner | 2 |

| 1 | 10.34 | 3.32 | Male | No | Sun | Dinner | 3 |

| 2 | 21.01 | 3.50 | Male | No | Sun | Dinner | 3 |

| 3 | 23.68 | 3.31 | Male | No | Sun | Dinner | 2 |

| 4 | 24.59 | 3.61 | Female | No | Sun | Dinner | 4 |

DELETE FROM tips WHERE tip > 9;

tips = tips.loc[tips['tip'] <= 9] |

| total_bill | tip | sex | smoker | day | time | size | |

|---|---|---|---|---|---|---|---|

| 0 | 16.99 | 4.04 | Female | No | Sun | Dinner | 2 |

| 1 | 10.34 | 3.32 | Male | No | Sun | Dinner | 3 |

| 2 | 21.01 | 3.50 | Male | No | Sun | Dinner | 3 |

| 3 | 23.68 | 3.31 | Male | No | Sun | Dinner | 2 |

| 4 | 24.59 | 3.61 | Female | No | Sun | Dinner | 4 |